Marat Khamadeev

Eco4cast: Smart Schedule for Green Learning

Today we are witnessing rapid growth in the capabilities of artificial intelligence models. The most notable successes are in language and generative models such as ChatGPT and Midjourney, which have made neural networks part of the daily lives of millions of people.

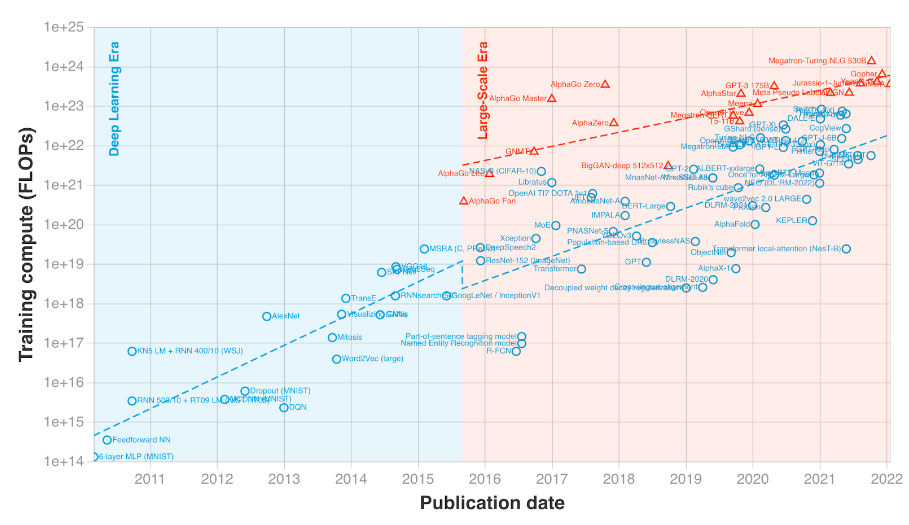

This progress is driven by exponential growth in the complexity and size of AI models. Since 2012, these characteristics have been doubling on average every 3-4 months, and today the record size exceeds 174 trillion parameters.

Training compute (FLOPs) of milestone Machine Learning systems over time. Source: Jaime Sevilla et al., arXiv.2202.05924

But such progress has a downside. Computations on central and graphics processors consume large amounts of energy, which ultimately makes a notable contribution to the carbon footprint. Managing the training of such models from an ecological perspective, i.e. minimizing electricity consumption and the equivalent CO2 emissions, is becoming an important factor in sustainable development.

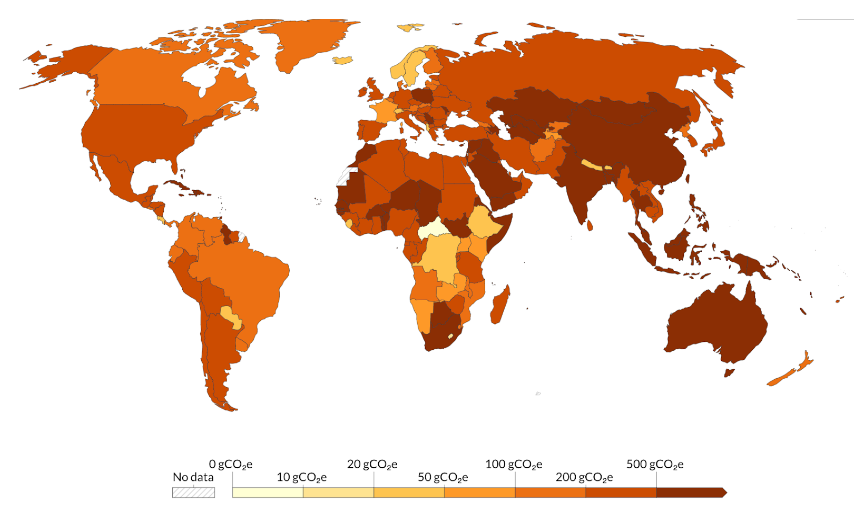

To address this problem, a team of scientists from AIRI and Sber led by Semen Budennyy exploited the fact that the carbon intensity of electricity is subject to significant daily fluctuations and also varies significantly between regions of the world. This means that the training of AI models can be scheduled for periods of lower carbon intensity, or moved to regions where it is lower, reducing the overall carbon footprint of AI while still achieving the desired performance.
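The effect of timing can be illustrated with a minimal sketch. It assumes a job that draws constant power and a hypothetical hourly carbon-intensity series; the function name, the 2 kW power figure, and the intensity values are illustrative assumptions, not data from the study and not part of eco4cast.

```python
def emissions_kg(power_kw, hourly_intensity_g_per_kwh):
    """Estimate CO2e (kg) for a job drawing constant power, one hour per listed value."""
    grams = sum(power_kw * ci for ci in hourly_intensity_g_per_kwh)
    return grams / 1000.0


# Hypothetical carbon intensity (gCO2e/kWh) for eight consecutive hours.
intensity = [300, 280, 250, 220, 200, 180, 170, 190]

start_now = emissions_kg(2.0, intensity[0:4])    # run during hours 0-3
start_later = emissions_kg(2.0, intensity[4:8])  # same job shifted to hours 4-7

print(f"start now: {start_now:.2f} kg, start later: {start_later:.2f} kg")
# prints "start now: 2.10 kg, start later: 1.48 kg"
```

The same four-hour job consumes the same energy in both cases; only the carbon intensity of the electricity it uses differs.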

CO2e emission factors by country for 2022. Source: https://ourworldindata.org/grapher/carbon-intensity-electricity

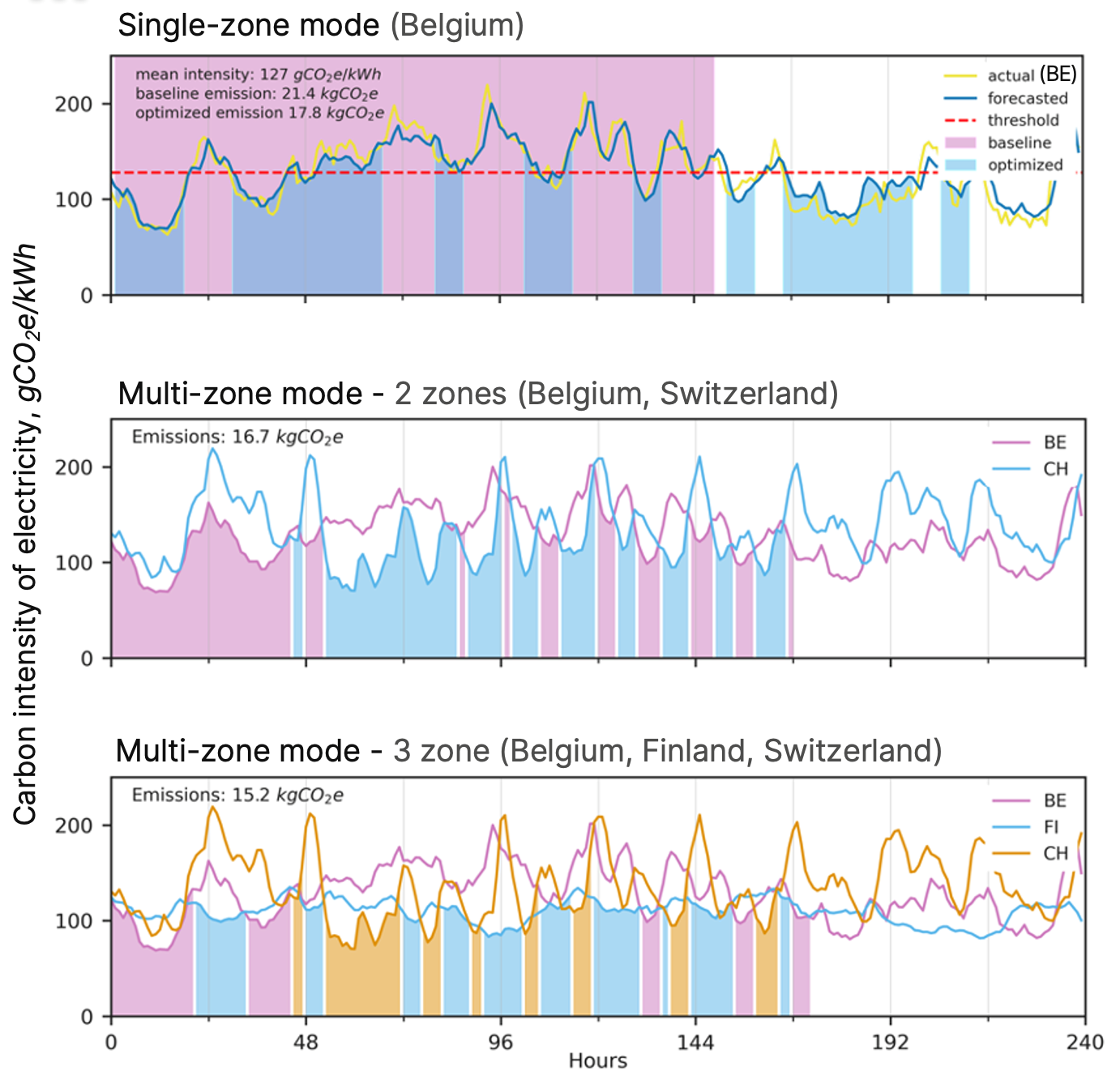

To make this work, the researchers developed an open-source package named eco4cast. The software predicts CO2 emission intensity and assigns the computation to the time intervals or computational zones with the lowest predicted carbon intensity of electricity. The forecast is produced by a neural network that analyzes emission data and 20 weather indicators for the regions under consideration. To accurately calculate the reduction in carbon footprint, eco4cast uses the eco2ai Python package. The scheduler can operate in either single-zone or multi-zone mode; selection of the optimal region for computation is currently implemented through integration with the Google Cloud API. The authors included CO2 emission intensity data for 13 areas covered by Google Cloud zones.
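The single-zone scheduling idea can be sketched in a few lines. This is not the eco4cast API or its actual algorithm: the function `pick_greenest_hours` and the forecast values are hypothetical, and the sketch assumes that training can be paused and resumed at hour boundaries and that a forecast is already available.

```python
def pick_greenest_hours(forecast, hours_needed):
    """Return the indices (in time order) of the hours with the lowest forecast intensity."""
    if hours_needed > len(forecast):
        raise ValueError("forecast horizon is shorter than the training time")
    # Rank hours from cleanest to dirtiest, then keep the cleanest ones.
    ranked = sorted(range(len(forecast)), key=lambda hour: forecast[hour])
    return sorted(ranked[:hours_needed])


# Hypothetical forecast of carbon intensity (gCO2e/kWh) for the next eight hours.
forecast = [310, 290, 240, 180, 150, 170, 260, 330]

training_hours = pick_greenest_hours(forecast, hours_needed=3)
print(training_hours)  # prints [3, 4, 5]
```

Training would then run only during the selected hours and pause otherwise, which is why this kind of schedule lengthens the wall-clock training time.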

The single-zone case is well suited to users whose computing resources are served by a single electricity provider. A series of experiments showed that this scenario provides a significant reduction in CO2 emissions (up to 70% in some cases, 25% on average) but requires some increase in training time. The multi-zone approach, by contrast, provides an optimal trade-off between the training time of an AI model and the reduction in CO2 emissions (up to 90% in some cases, 77% on average).
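The multi-zone case can be sketched in the same spirit. The example below is a simplified illustration rather than the package's implementation: the zone names and intensity values are hypothetical, and it assumes that moving a job between zones is free, which a real scheduler would have to account for.

```python
def pick_zone_per_hour(forecasts):
    """For each hour, choose the zone with the lowest forecast carbon intensity.

    forecasts maps a zone name to a list of hourly intensities (gCO2e/kWh).
    Returns a list of (zone, intensity) pairs, one per hour.
    """
    horizon = min(len(values) for values in forecasts.values())
    plan = []
    for hour in range(horizon):
        best_zone = min(forecasts, key=lambda zone: forecasts[zone][hour])
        plan.append((best_zone, forecasts[best_zone][hour]))
    return plan


# Hypothetical four-hour forecasts for two zones.
forecasts = {
    "zone_a": [200, 150, 180, 220],
    "zone_b": [120, 160, 90, 230],
}

plan = pick_zone_per_hour(forecasts)
print(plan)
# prints [('zone_b', 120), ('zone_a', 150), ('zone_b', 90), ('zone_a', 220)]
```

Because some zone is available at every hour, training never has to pause, which is one way to read the better time-versus-emissions trade-off reported for the multi-zone mode.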

Demonstration of eco4cast scheduling of ML model training started on 29 May 2022 for single-zone mode (Belgium) and multi-zone mode (2 zones: Belgium and Switzerland; 3 zones: Belgium, Finland and Switzerland). Filled areas indicate the time intervals used for training in the chosen zones. Indirect CO2 emissions were 21.4 kg in the baseline experiment without scheduling, 17.8 kg in single-zone mode, 16.7 kg in multi-zone mode with 2 zones, and 15.2 kg in multi-zone mode with 3 zones.
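The relative savings implied by the emission figures in this demonstration can be worked out directly. The snippet below uses only the four values quoted above; the variable and function names are illustrative.

```python
baseline_kg = 21.4  # no scheduling

scheduled_kg = {
    "single-zone": 17.8,
    "multi-zone, 2 zones": 16.7,
    "multi-zone, 3 zones": 15.2,
}


def reduction_pct(baseline, value):
    """Relative reduction versus the baseline, in percent."""
    return 100.0 * (baseline - value) / baseline


for mode, kg in scheduled_kg.items():
    print(f"{mode}: {reduction_pct(baseline_kg, kg):.1f}% lower than baseline")
# prints:
# single-zone: 16.8% lower than baseline
# multi-zone, 2 zones: 22.0% lower than baseline
# multi-zone, 3 zones: 29.0% lower than baseline
```

For this particular run the savings are roughly 17%, 22% and 29%, within the ranges reported for the wider series of experiments.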

The authors hope that eco4cast will become an essential component for improving the ecological efficiency of AI model training. The code and documentation of the package are hosted on GitHub under the Apache 2.0 license. The article with the research results has been submitted for publication to the journal Doklady Mathematics.